Wronskian

1. Derivative of a determinant

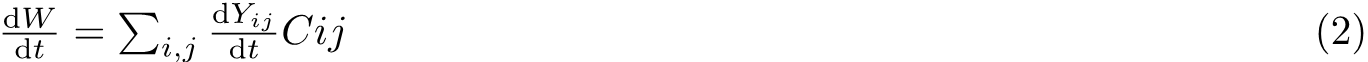

Consider the determinant W(t) of an n×n matrix Y(t) whose elements y_ij(t) are all functions of t. Treating the n² elements as independent variables, we can write:

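$$\frac{dW}{dt} = \sum_{i=1}^{n}\sum_{j=1}^{n} \frac{\partial W}{\partial y_{ij}}\,\frac{dy_{ij}}{dt}, \qquad \frac{\partial W}{\partial y_{ij}} = C_{ij} \tag{1}$$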

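where C_ij is the cofactor of y_ij. Expanding W along its ith row gives W = Σ_k y_ik C_ik, and none of the cofactors C_ik contains an element of row i, so ∂W/∂y_ij = C_ij. Thus we have: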

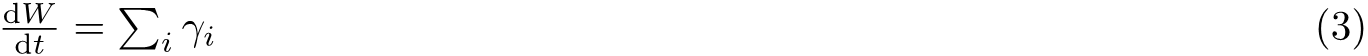
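Equation (2) is easy to sanity-check numerically. The sketch below assumes a hypothetical 3×3 matrix Y(t), chosen only for illustration, computes the cofactors by Laplace expansion, evaluates the double sum Σ_i Σ_j C_ij y'_ij at one point, and compares it with a central finite-difference estimate of dW/dt.

```python
import math

def det(m):
    """Determinant by Laplace expansion along the first row (adequate for small n)."""
    n = len(m)
    if n == 0:
        return 1.0  # empty-matrix convention, needed for the cofactors of a 1x1 matrix
    total = 0.0
    for j in range(n):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total

def cofactor(m, i, j):
    """C_ij: signed determinant of m with row i and column j deleted."""
    minor = [row[:j] + row[j + 1:] for k, row in enumerate(m) if k != i]
    return (-1) ** (i + j) * det(minor)

# Hypothetical test matrix Y(t) and its elementwise derivative Y'(t).
def Y(t):
    return [[t, t ** 2, 1.0],
            [math.sin(t), math.cos(t), t],
            [math.exp(t), 1.0, t ** 3]]

def dY(t):
    return [[1.0, 2 * t, 0.0],
            [math.cos(t), -math.sin(t), 1.0],
            [math.exp(t), 0.0, 3 * t ** 2]]

t0 = 0.7
y, yp = Y(t0), dY(t0)
n = len(y)

# Equation (2): dW/dt = sum over i, j of C_ij * y'_ij.
via_cofactors = sum(cofactor(y, i, j) * yp[i][j] for i in range(n) for j in range(n))

# Central finite difference of W(t) = det Y(t), for comparison.
h = 1e-5
via_finite_difference = (det(Y(t0 + h)) - det(Y(t0 - h))) / (2 * h)

print(via_cofactors, via_finite_difference)
```

Recursive Laplace expansion costs O(n!) operations and is only meant for tiny matrices; it is used here because it keeps the cofactors explicit.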

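Let γ_i be the matrix obtained from Y by replacing its ith row with the derivative of that row. The inner sum Σ_j C_ij y'_ij in (2) is exactly the cofactor expansion of det γ_i along its ith row, so (2) can be written in the tidier form:

$$\frac{dW}{dt} = \sum_{i=1}^{n} \det \gamma_i \tag{3}$$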
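The γ_i form can be checked exactly with rational arithmetic. In this sketch the matrix Y(t) = [[t, 1, 0], [0, t, 1], [1, 0, t]] is a hypothetical example (not from the source) with det Y = t³ + 1, so dW/dt should equal 3t²; its derivative Y' is the identity matrix. The helper `sum_of_gamma_dets` is a name introduced here for illustration: it builds each γ_i by swapping in row i of Y' and adds up the determinants.

```python
from fractions import Fraction

def det(m):
    """Determinant by Laplace expansion along the first row (adequate for small n)."""
    if not m:
        return 1  # empty-matrix convention
    total = 0
    for j in range(len(m)):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total

def sum_of_gamma_dets(y, yp):
    """Equation (3): sum of det(gamma_i), where gamma_i is y with row i replaced by row i of yp."""
    total = 0
    for i in range(len(y)):
        gamma_i = [yp[k] if k == i else y[k] for k in range(len(y))]
        total += det(gamma_i)
    return total

# Hypothetical example evaluated at t = 3/2 with exact fractions.
t = Fraction(3, 2)
y = [[t, 1, 0], [0, t, 1], [1, 0, t]]
yp = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

print(det(y), sum_of_gamma_dets(y, yp))  # prints: 35/8 27/4
```

Here det Y = t³ + 1 = 35/8 and the sum of the three det γ_i (each equal to t²) is 3t² = 27/4, the derivative of t³ + 1 at t = 3/2.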

2. Abel-Jacobi-Liouville identity

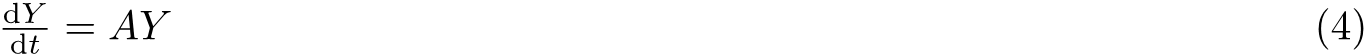

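Any system of n first-order linear ordinary differential equations can be written as a single matrix equation. Taking n solutions as the columns of the matrix Y(t), we have:

$$\frac{dY}{dt} = A(t)\,Y$$

where A(t) is the n×n coefficient matrix and a_ik denotes its (i, k) element.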

It follows that

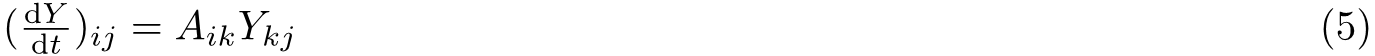

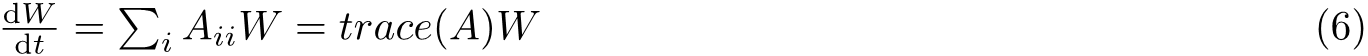

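So each row of the derivative is a linear combination of the rows of Y: the ith row of dY/dt equals Σ_k a_ik times the kth row of Y, because every element of the kth row (whatever its column j) is multiplied by the same factor a_ik. Substituting this into det γ_i and using the linearity of the determinant in its ith row, every term with k ≠ i is the determinant of a matrix with two equal rows and therefore vanishes, leaving only the k = i term. Hence each term on the right-hand side of (3) is W times the corresponding diagonal element of A: det γ_i = a_ii W.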
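The row argument can be illustrated with integer matrices, where the arithmetic is exact. Assuming Y' = AY at some fixed t, the sketch takes arbitrary hypothetical matrices A and Y, forms Y' = AY, builds each γ_i, and compares det γ_i with a_ii W.

```python
def det(m):
    """Determinant by Laplace expansion along the first row (adequate for small n)."""
    if not m:
        return 1  # empty-matrix convention
    total = 0
    for j in range(len(m)):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total

def matmul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

# Hypothetical values of A and Y at one fixed t.
A = [[1, 2, 3], [4, 5, 6], [7, 8, 10]]
Y = [[2, 0, 1], [1, 3, 0], [0, 1, 4]]
Yp = matmul(A, Y)  # plays the role of dY/dt when Y' = A Y
W = det(Y)

gamma_dets = []
for i in range(3):
    # gamma_i: Y with its ith row replaced by the ith row of Y'
    gamma_i = [Yp[k] if k == i else Y[k] for k in range(3)]
    gamma_dets.append(det(gamma_i))

expected = [A[i][i] * W for i in range(3)]
print(gamma_dets, expected)  # prints: [25, 125, 250] [25, 125, 250]
```

The off-diagonal entries of A change the rows of Y' but drop out of every det γ_i, which is exactly the cancellation described above.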

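Thus,

$$\frac{dW}{dt} = \left(\sum_{i=1}^{n} a_{ii}(t)\right) W = \operatorname{tr} A(t)\, W, \qquad\text{so}\qquad W(t) = W(t_0)\exp\!\left(\int_{t_0}^{t} \operatorname{tr} A(s)\,ds\right).$$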
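Liouville's formula, W(t) = W(t_0) exp(∫_{t_0}^t tr A(s) ds), can be checked by integrating Y' = A(t)Y numerically. This is a minimal sketch under stated assumptions: the coefficient matrix A(t) = [[sin t, 1], [-1, t/2]] is made up for illustration (its trace is sin t + t/2), the initial condition is Y(0) = I, and the integrator is a plain fixed-step classical Runge-Kutta (RK4) scheme written out by hand rather than any particular library's solver.

```python
import math

def A(t):
    # Hypothetical coefficient matrix; tr A(t) = sin t + t/2.
    return [[math.sin(t), 1.0], [-1.0, 0.5 * t]]

def rhs(t, y):
    """Right-hand side of Y' = A(t) Y for a 2x2 matrix Y (list of rows)."""
    a = A(t)
    return [[sum(a[i][k] * y[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def axpy(y, h, k):
    """Return y + h*k elementwise for 2x2 matrices."""
    return [[y[i][j] + h * k[i][j] for j in range(2)] for i in range(2)]

def rk4(t0, y0, t1, steps):
    """Classical fourth-order Runge-Kutta integration of Y' = A(t) Y from t0 to t1."""
    h = (t1 - t0) / steps
    t, y = t0, y0
    for _ in range(steps):
        k1 = rhs(t, y)
        k2 = rhs(t + h / 2, axpy(y, h / 2, k1))
        k3 = rhs(t + h / 2, axpy(y, h / 2, k2))
        k4 = rhs(t + h, axpy(y, h, k3))
        y = [[y[i][j] + h / 6 * (k1[i][j] + 2 * k2[i][j] + 2 * k3[i][j] + k4[i][j])
              for j in range(2)] for i in range(2)]
        t += h
    return y

def det2(m):
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

T = 1.0
Y_T = rk4(0.0, [[1.0, 0.0], [0.0, 1.0]], T, 1000)
W_numeric = det2(Y_T)

# Liouville: W(T) = W(0) * exp(integral_0^T tr A(s) ds), with W(0) = det I = 1
# and integral_0^T (sin s + s/2) ds = 1 - cos T + T**2 / 4.
W_formula = 1.0 * math.exp(1 - math.cos(T) + T ** 2 / 4)

print(W_numeric, W_formula)
```

Only the trace of A enters the formula, so changing the off-diagonal entries of the hypothetical A(t) should leave W(T) unchanged even though the individual solutions change.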

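This has some useful consequences. Because W(t) equals W(t_0) times an exponential, which never vanishes, W(t) is either identically zero or never zero on any interval where A(t) is continuous. For example, if the solutions are linearly independent at a single point of the interval (W(t_0) ≠ 0), they are linearly independent on the whole interval.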
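A concrete case, chosen here for illustration rather than taken from the source: the scalar equation y'' + y = 0 becomes the system y_1' = y_2, y_2' = -y_1 with y_1 = y and y_2 = y'. Taking the solutions cos t and sin t, together with their derivatives, as the columns of Y gives

$$
Y(t) = \begin{pmatrix} \cos t & \sin t \\ -\sin t & \cos t \end{pmatrix}, \qquad
A = \begin{pmatrix} 0 & 1 \\ -1 & 0 \end{pmatrix}, \qquad \operatorname{tr} A = 0,
$$

$$
W(t) = \cos^2 t + \sin^2 t = 1 = W(0)\, \exp\!\left(\int_0^t 0\, ds\right).
$$

Here tr A = 0, so the formula predicts a constant Wronskian, and since W(0) = 1 ≠ 0 the two solutions are independent for every t, as the direct computation confirms.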

[Literature: Pontryagin 1962, Chapter 3]