Inside Imperial’s fourth-year Deep Learning course

Professor Bernhard Kainz outlines how Imperial’s fourth-year Deep Learning course combines theory with hands-on experimentation at scale. With more than 600 students taking part this year, the course explores both the foundations of deep learning and emerging approaches in generative and multimodal AI.

Deep learning continues to evolve at pace, but a strong understanding of its foundations remains essential. In the Department of Computing, students on the fourth-year Deep Learning course engage with both the underlying theory and the modern frontiers of the field, combining rigorous analysis with hands-on experimentation at scale.

This year, more than 600 students took part under the joint leadership of Professor Bernhard Kainz and Dr Yingzhen Li, with support from Dr Harry Coppock from the UK AI Security Institute (AISI). The course explores core principles of representation learning, modern architectures and optimisation strategies, and integrates classical deep learning with emerging paradigms in generative and multimodal AI.

A central component of the course is the well-established “hot dog, not hot dog” project, inspired by the television series Silicon Valley. Students begin by developing robust classifiers to distinguish between hot dog and non-hot dog images, addressing challenges such as dataset bias, distribution shift and generalisation.

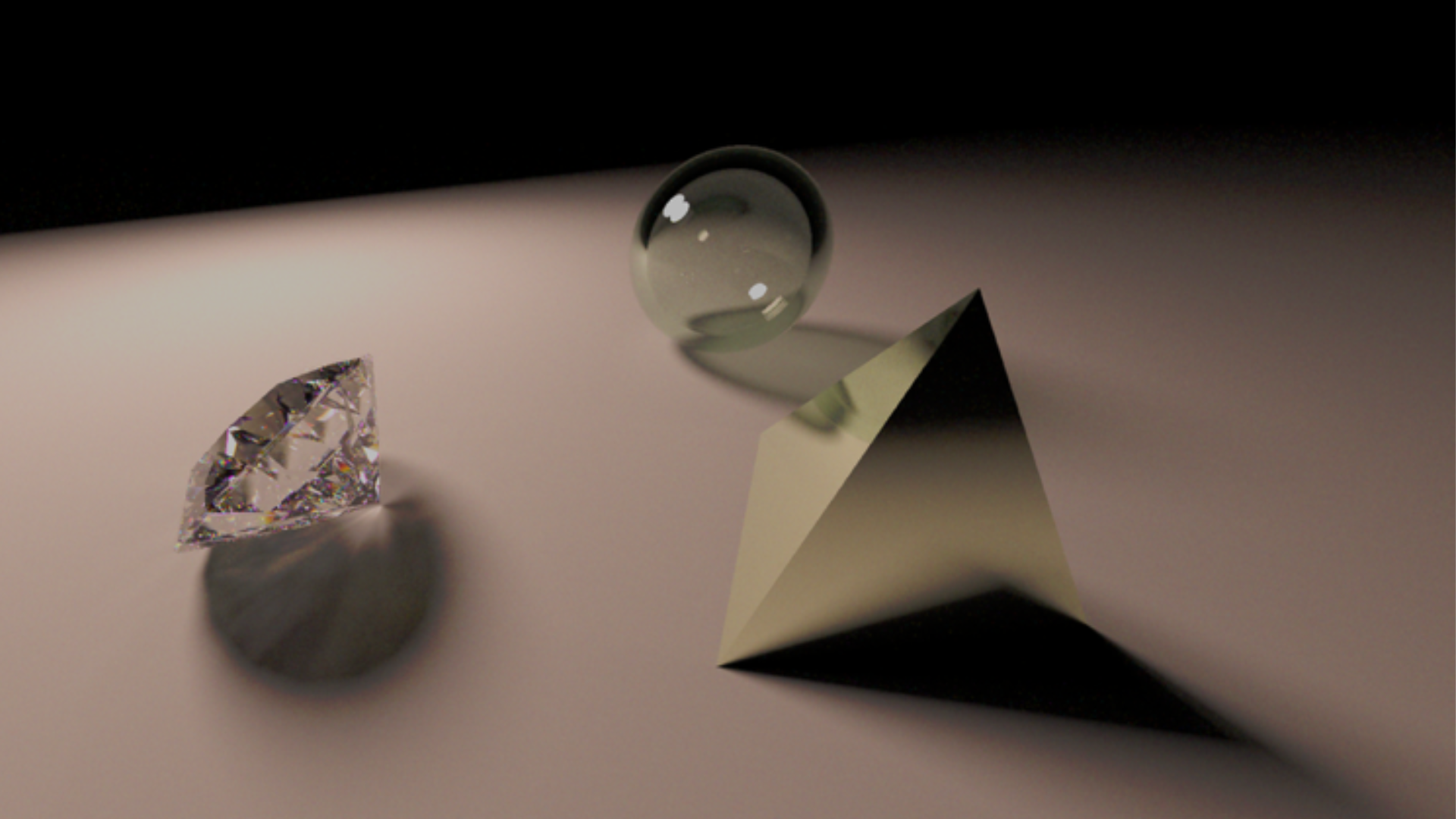

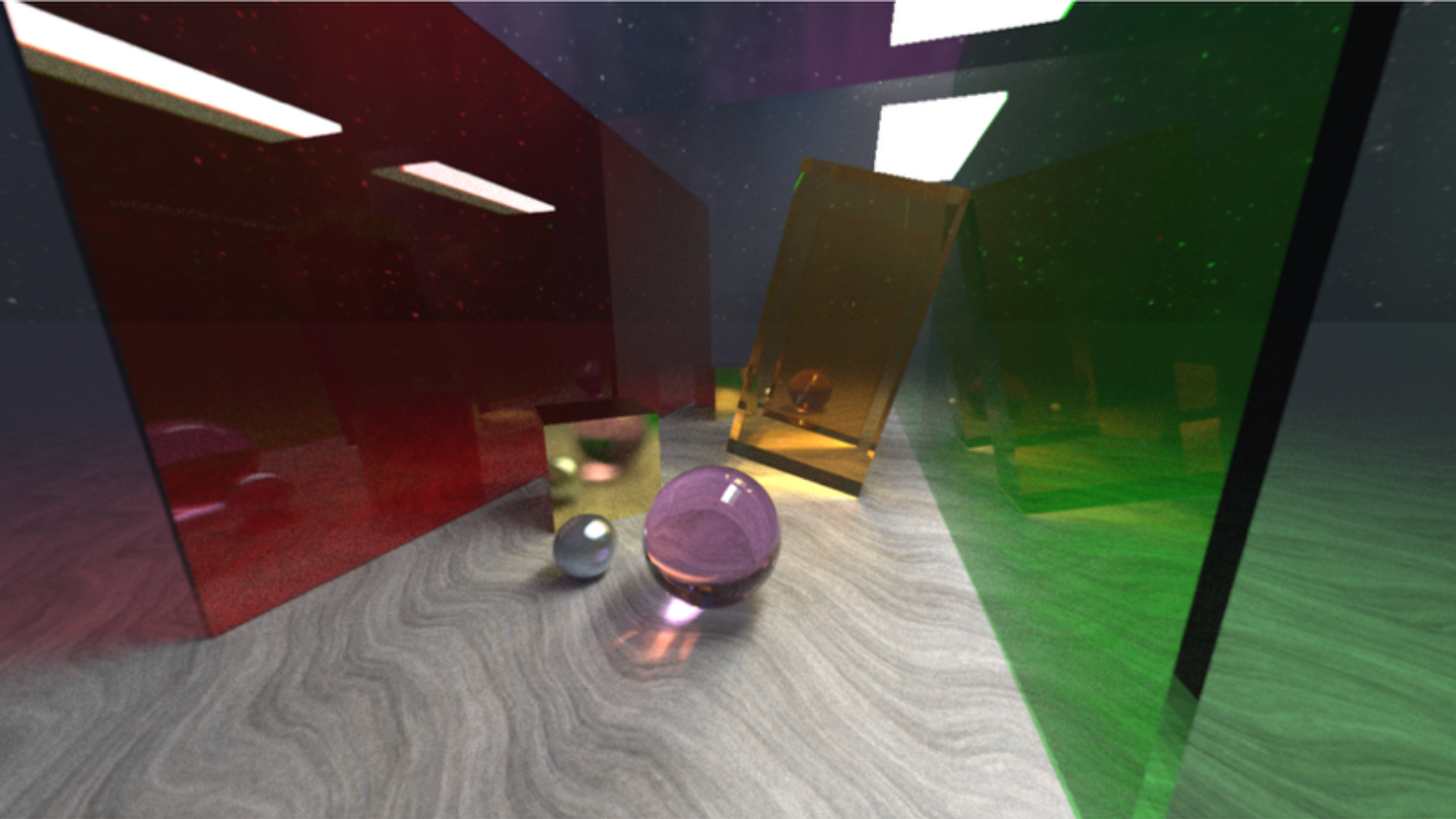

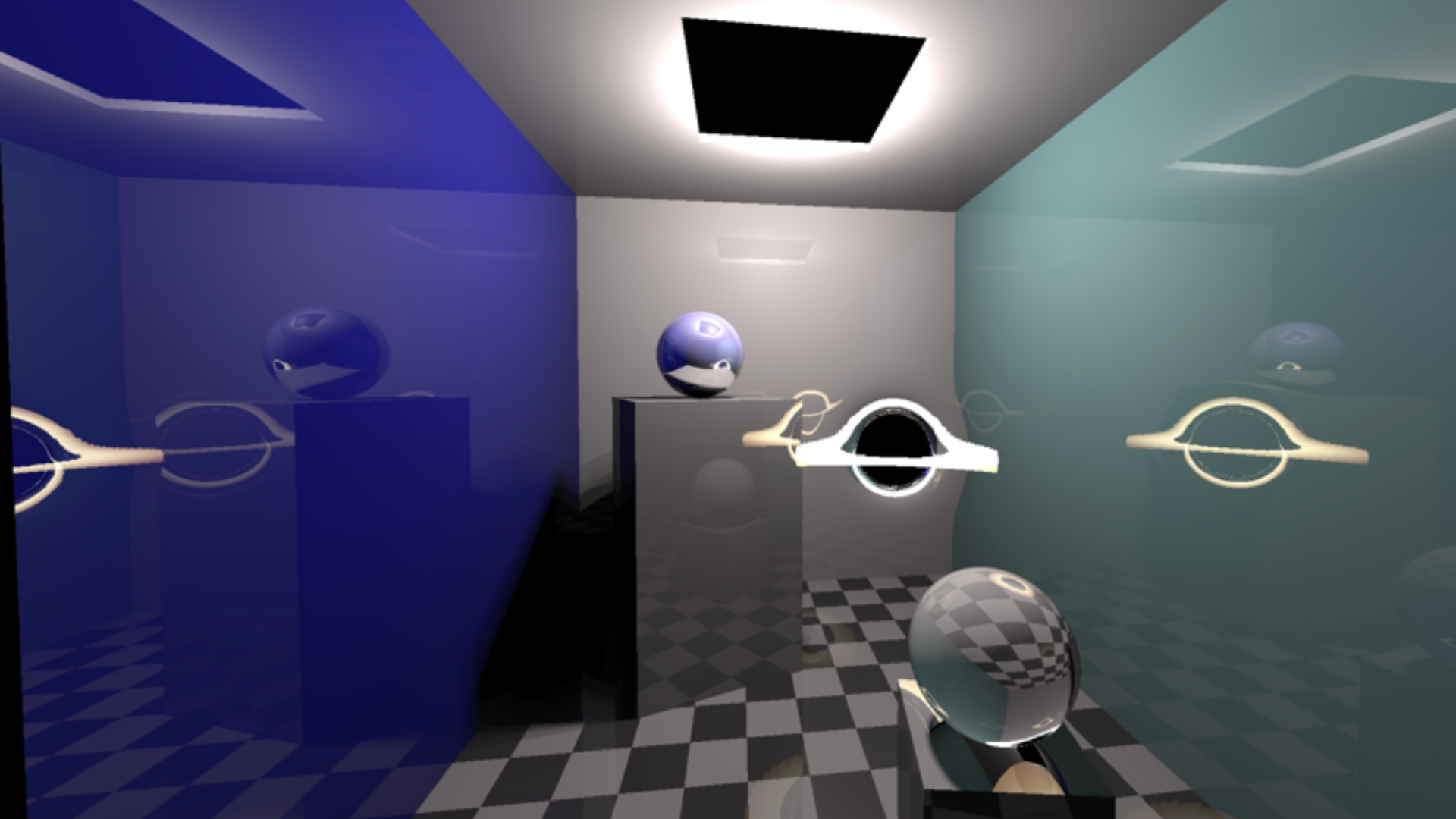

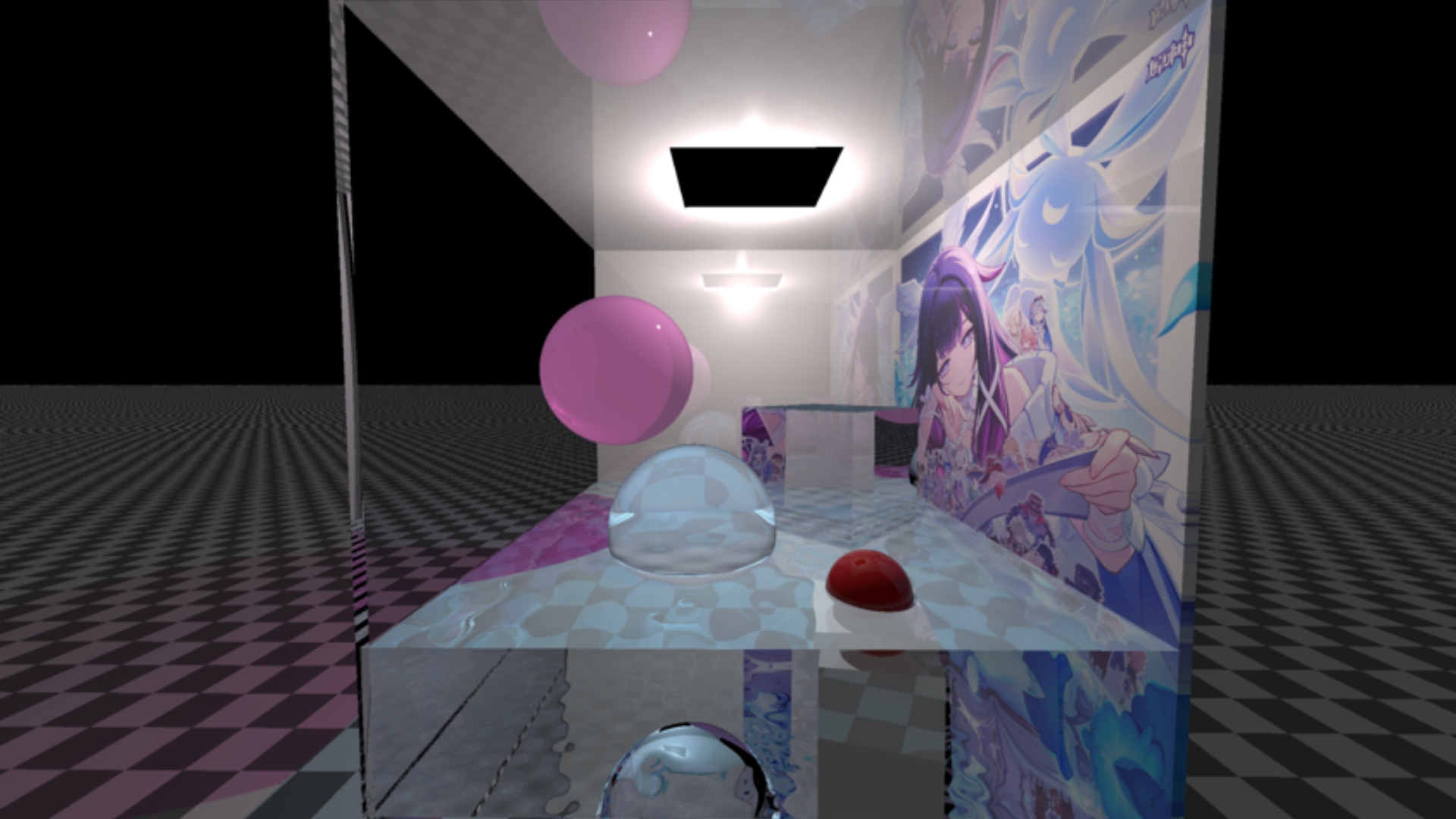

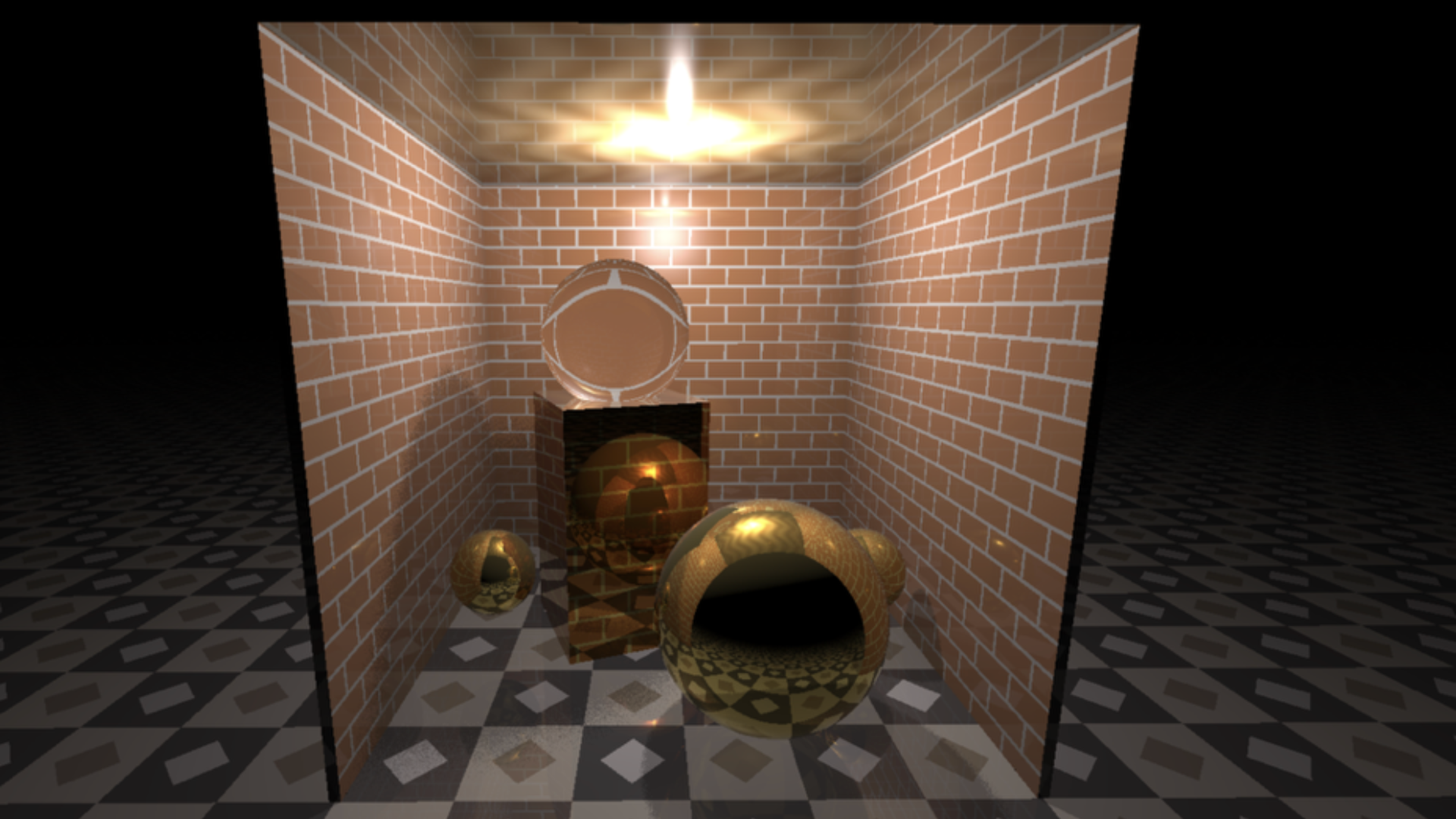

Building on this work, students then extend their models to generate entirely new images, transitioning from discriminative to generative modelling. Through this process, they are introduced to advanced concepts including diffusion-based generative models, data-centric AI and the limitations of synthetic data.

Although playful in appearance, the project is designed to operate under realistic computational constraints. Students typically work in single-GPU environments, encouraging efficient model design and careful experimentation rather than brute-force scaling. This setup supports practical engagement with how models learn, fail and generalise.

This year’s submissions included strong examples of resource-efficient diffusion models capable of synthesising high-quality images from noise. These approaches reflect techniques that are now widely accessible through large language model interfaces provided by organisations such as OpenAI, Google and Anthropic.
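For readers curious what "synthesising images from noise" involves, the following is a minimal sketch of the forward (noising) half of a denoising diffusion model, assuming the standard closed-form formulation with a linear noise schedule. The article does not describe the students' implementations, so the function names, schedule values and the toy four-pixel "image" are illustrative assumptions only.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Return noise variances that increase linearly over the steps (assumed values)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """Return alpha_bar_t, the running product of (1 - beta_s) up to each step t."""
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(pixels, alpha_bar, rng):
    """Sample a noisy image x_t directly from the clean image x_0.

    x_t = sqrt(alpha_bar) * x_0 + sqrt(1 - alpha_bar) * eps, with eps ~ N(0, 1).
    Returns x_t and eps; a denoising network would be trained to predict eps.
    """
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [signal_scale * p + noise_scale * e for p, e in zip(pixels, noise)]
    return noisy, noise


if __name__ == "__main__":
    rng = random.Random(0)
    alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
    # Hypothetical stand-in for a flattened image scaled to [-1, 1].
    image = [0.5, -0.25, 1.0, 0.0]
    for t in (0, 499, 999):
        noisy, _ = add_noise(image, alpha_bars[t], rng)
        print(t, round(alpha_bars[t], 4), [round(v, 3) for v in noisy])
```

As the step index grows, the remaining signal fraction shrinks towards zero and the sample approaches pure Gaussian noise. Generation runs this process in reverse, using a trained network to remove the noise one step at a time; that network is where most of the compute goes, which is why efficient design matters on a single GPU.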

Given the size of the cohort, separate winners were selected for each course stream following a vote conducted by the 25 graduate teaching assistants supporting the course.

Project winners

COMP60034: Tom Shtasel

COMP70010: Harvey Densem

The project was led by Hanna Tolle and Carles Balsells Rodas, whose coordination supported the delivery of the course at scale.

The winners were awarded API credits for the large language model of their choice, supporting continued exploration of modern AI systems beyond the course.