Why Open Access reporting is difficult (Imperial College London 2014/15 RCUK Open Access report)

Earlier today Imperial College London submitted its open access compliance report to RCUK. Like most UK universities, the College receives an annual open access block grant from RCUK. The funds are made available to support universities in meeting the requirements of the RCUK open access policy, in particular the cost of article processing charges (APCs) to make articles open access through the publisher. RCUK allocate funds in relation to research effort and Imperial College receives the second-largest grant – £1,353,480 for 2014/15 (Cambridge is #1 with £1,355,073). The report, based on a template developed by Jisc, details how the money has been spent and provides headline compliance figures. It has been put together by the College Library and the Research Office, with support from ICT.

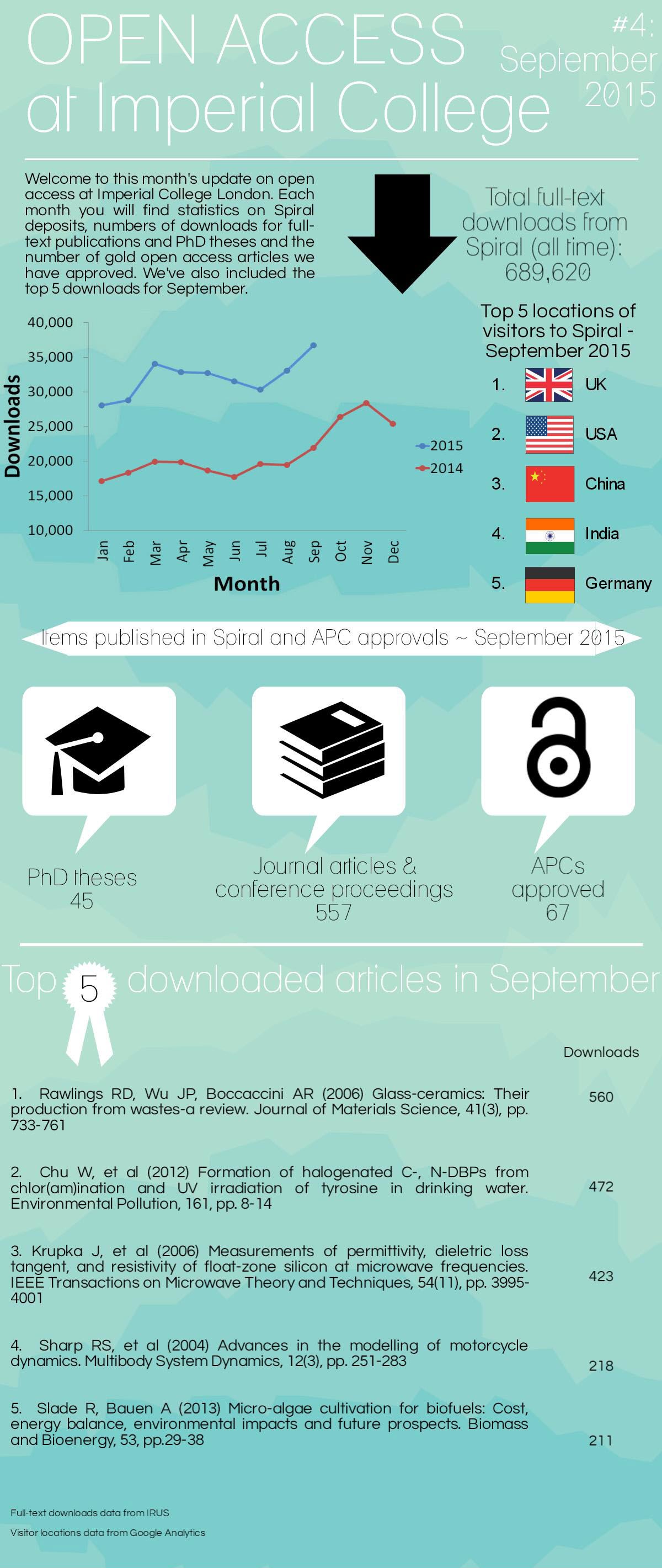

You can access the report via Spiral, the College repository.

The headline figures are an estimated 31% compliance via the gold route and 38% via the green route; we also provide details on APCs for 350 open access articles processed by the College Library. However, before you delve further into the spreadsheet or start comparing these figures to other universities, I would like to draw your attention to some of the inherent issues with these reports and figures.

First of all you may notice that the numbers do not seem to add up. We report an APC spend of £597,029 and yet the 350 APCs add up to £679,721.08. The reason for this apparent mismatch is that the first figure is for the period from April 2014 to March 2015, as requested in the spreadsheet, whereas the APCs are reported to RCUK until August 2015.

Secondly, the number of APCs does not equal 31% of the outputs we report on. This is because some of the articles originating from RCUK funding have been paid for by other institutions, usually because the principal investigator was based there and not at Imperial College.

Most importantly though, I would caution against directly comparing compliance figures between universities – unless you know exactly how they have been calculated. The biggest challenge, especially for large research-intensive universities, is establishing what 100% is: how many outputs are related to RCUK funding? Currently there is no reliable way to derive funder information from article metadata, even where authors report the funders to the publisher. RCUK-funded authors are asked to report outputs to the research councils, but the reporting period does not overlap with the OA reporting period. That means that even if all authors reliably linked all outputs to all relevant grants (this is a manual process), the information would not be sufficient to report on. Earlier this year Imperial College introduced a new workflow (for depositing outputs on acceptance) that encourages authors to link outputs and funding, but it will be a while until we can be reasonably confident that close to 100% of outputs are linked to all relevant grants.

Why do we not just manually go through all articles and speak to the authors? It is a question of scale – College academics publish between 10,000 and 12,000 articles and conference proceedings per year. We estimate that some 4,000 of these outputs may be linked to RCUK funding.

So how did we come to the compliance figures reported to RCUK? We analysed a sample of some 1,500 outputs we know to be linked to RCUK funding. Sadly, there is currently no reliable way to automatically establish the open access status of an output: publishers do not usually add licence information to output metadata, and tracking outputs in repositories creates problems of its own. We do of course know how many outputs the College Library paid an APC for, and also which outputs were deposited into the College repository Spiral. We do not know where other universities have paid an APC for an article, or where an author may have used departmental or other funds to pay an APC.

We were able to identify additional open access outputs by cross-referencing our data with the list from the Directory of Open Access Journals (DOAJ) and the Europe PubMed Central database. Even so we will have missed outputs, for example papers deposited into repositories like arXiv. We do track arXiv deposits, but there is currently no way of telling what version has been deposited. Even if we knew the version, deposits in repositories pose another problem: where an APC has been paid and the output deposited, do we report it as green or gold OA? In the case of RCUK we have decided to mark it as gold, as that is the preferred route for the UK research councils, but others may have decided differently.
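To make the cross-referencing logic concrete, here is a minimal Python sketch of how a sample of outputs could be classified. It is an illustration under stated assumptions, not the College's actual workflow: the `Output` record, the function names and the use of DOIs and ISSNs as matching keys are all hypothetical, and treating a Europe PubMed Central match as evidence of green OA is an assumption made purely for the example. The one rule taken directly from the text is that an output with a paid APC is counted as gold even if it has also been deposited.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Output:
    """Hypothetical record for one research output in the sample."""
    doi: str
    issn: str


def classify(output, apc_dois, doaj_issns, spiral_dois, europe_pmc_dois):
    """Return 'gold', 'green' or 'unknown' for a single output."""
    # Gold is checked first: where an APC was paid AND the output was
    # deposited, the text says it is reported as gold for RCUK.
    if output.doi in apc_dois or output.issn in doaj_issns:
        return "gold"
    # Assumption for this sketch: any repository or Europe PMC match
    # counts as green (the deposited version is not checked).
    if output.doi in spiral_dois or output.doi in europe_pmc_dois:
        return "green"
    # No evidence found, e.g. an APC paid by another institution.
    return "unknown"


def compliance_shares(sample, apc_dois, doaj_issns, spiral_dois, europe_pmc_dois):
    """Return the share of the sample falling into each category."""
    if not sample:
        raise ValueError("sample must contain at least one output")
    counts = Counter(
        classify(o, apc_dois, doaj_issns, spiral_dois, europe_pmc_dois)
        for o in sample
    )
    return {route: counts[route] / len(sample)
            for route in ("gold", "green", "unknown")}
```

Note that the "unknown" category is not the same as non-compliant: it simply collects the outputs for which none of the available data sources provides evidence, which is exactly why figures produced this way are estimates.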

I could go on much longer, but I hope the above gives you an idea of the issues that universities face when reporting on open access. Should you still want to compare university open access reports, make sure to check the data source and methods. The good news is that in the future these reports should become more meaningful, in particular when publishers and system vendors add funder, institutional and author identifiers (such as ORCID) to output metadata.

Finally, I would like to highlight two issues we raised with RCUK when submitting the report:

Many points made by the College in last year’s submission regarding policy implementation are still valid (see paragraphs 35 ff.). The College has made good progress in delivering support infrastructure (significantly reducing processing time for gold and green OA), but concerns about the wider policy landscape and publisher support for open access remain. In particular, we would like to highlight two points:

- Hybrid open access remains significantly more expensive than full OA (~50% more per APC), even without taking into account “double dipping”. Processing APCs for hybrid journals continues to require more resource, i.e. in relation to licensing and invoicing. The Finch report saw hybrid as a means of transitioning from a subscription to a full OA model, but there is very little evidence of that transition taking place. The majority of OA funds are still spent on hybrid.

- Differences in funder policies make it harder for academics to understand how to comply and increase the workload for support services. RCUK is encouraged to harmonise policy requirements with other funders, in particular with the Policy for open access in the post-2014 Research Excellence Framework. We note that HEFCE have made changes to align policies with regards to gold OA and we would encourage RCUK to consider a similar step for green OA.